Compare and Contrast

Variability across influenza studies makes it difficult to compare datasets – could a new algorithm bridge the gap?

Reproducibility poses a significant challenge to biology researchers. Some results align across labs while others strongly disagree, leading teams to work in silos – analyzing their own data without leveraging previous research.

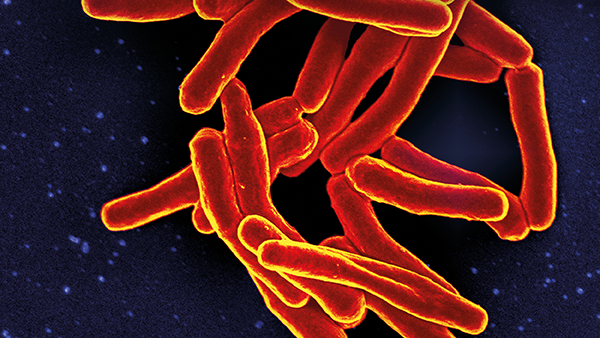

“Using other datasets is tricky,” agrees Tal Einav, Assistant Professor at La Jolla Institute for Immunology, California, USA. “For example, suppose we measure the antibody response in adults after they receive the flu vaccine this year. Should we compare it to the antibody response in children, or might children produce a fundamentally different response? What about adults from a study conducted last year or five years ago? We know that antibody responses change over time, but might there be some patterns in those old data that we can still use today?”

Before even trying to answer those questions, Einav had to overcome a major hurdle: some of the antibody-virus interactions he predicted across different datasets were “highly accurate, while others were terrible.” Differentiating between these two cases became his top priority. “I quickly decided that I needed to estimate the confidence of each prediction,” says Einav. “That way, even if we cannot predict everything perfectly, we can focus on the highly trustworthy predictions and not be fooled by the uncertain ones.”

In such scenarios, Einav’s go-to trick is to leverage the fact that science is best done as a community: “I seek out experts who have solved a related problem and can point me in the right direction.” He reached out to Rong Ma, now a professor at Harvard University, who told him that very few algorithms could estimate the confidence of each prediction. “Each one we tried worked poorly because they were designed to predict values within a single dataset rather than across different datasets,” Einav says.

Down but not out, Einav and Ma regrouped and decided that, if no other algorithm would do the trick, they would create their own.

“As it turned out, our skillsets were exactly what was needed for this problem, and we broke through the problem within weeks,” explains Einav. “We ended up with a very general framework that can be used to predict between any datasets that measure two-body interactions; for example, how well drugs inhibit different cancer cell lines, the downstream signal from proteins composed of different subunits, or even how much people will enjoy different movies.”

Whether they use different variants or measure antibody responses at different time points, influenza studies all differ from one another at least slightly, which makes direct comparison difficult. The current study helps to overcome this challenge. “Our algorithm lets us precisely quantify how well information can be transferred across studies and determine what features of the antibody response (a person’s age, location, infection history) really matter.”

Explaining how the algorithm works, Einav says, “We first withhold a fraction of the data from one study and use all other studies to predict those withheld values. When two datasets are very similar, we find excellent predictions; when two datasets fundamentally differ, the predictions are far worse. Applying this reasoning in reverse, these predictions let us quantify the similarity between datasets.”
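The withhold-and-predict idea Einav describes can be illustrated with a toy sketch. This is not the authors' algorithm: it swaps in an ordinary least-squares fit as a simple stand-in predictor, and the data, the function names (`transfer_error`, `make_study`), and the choice to withhold one whole virus column are all assumptions made for illustration. Each synthetic "study" is a table of people (rows) by viruses (columns); one column of the target study is withheld, predicted from a model trained on another study, and the prediction error is then read as a rough measure of how dissimilar the two studies are.

```python
import numpy as np


def transfer_error(source, target, withheld_col):
    """RMSE when a linear model fit on `source` predicts one withheld column of `target`.

    Illustrative stand-in only: a plain least-squares fit, not the published method.
    """
    other = [c for c in range(source.shape[1]) if c != withheld_col]
    # Fit in the source study: withheld virus ~ other viruses + intercept.
    x_src = np.column_stack([source[:, other], np.ones(len(source))])
    coef, *_ = np.linalg.lstsq(x_src, source[:, withheld_col], rcond=None)
    # Predict the withheld values in the target study from its remaining columns.
    x_tgt = np.column_stack([target[:, other], np.ones(len(target))])
    pred = x_tgt @ coef
    return float(np.sqrt(np.mean((pred - target[:, withheld_col]) ** 2)))


def make_study(rng, weights, n_people=200, noise=0.1):
    """Hypothetical synthetic study: columns 0-2 are random, column 3 is a noisy weighted sum."""
    base = rng.normal(size=(n_people, 3))
    last = base @ weights + noise * rng.normal(size=n_people)
    return np.column_stack([base, last])


rng = np.random.default_rng(0)
w = np.array([1.0, 0.5, -0.5])
study_a = make_study(rng, w)   # target study
study_b = make_study(rng, w)   # follows the same underlying pattern as A
study_c = make_study(rng, -w)  # follows the opposite pattern

# Withhold column 3 of study A and predict it from each of the other studies.
err_similar = transfer_error(study_b, study_a, withheld_col=3)
err_different = transfer_error(study_c, study_a, withheld_col=3)

print(f"error using similar study:   {err_similar:.2f}")
print(f"error using different study: {err_different:.2f}")
```

In this toy setup, the study that shares the target's pattern yields a much smaller error than the one that does not, which is the sense in which prediction quality can be turned around and used as a similarity score between datasets.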

With a new algorithm in hand, what did they find? “First, we showed that antibody responses are highly degenerate – if you look at the responses from 10,000 different people, you will only find several dozen different types of responses. So, instead of everyone having a completely unique antibody response, there are a few set patterns, and this makes predictions possible,” says Einav. “We also found that vaccine responses tend to be highly similar across space (responses from the US predicted those from Australia) and across time (antibody responses measured in 2000 predicted those from 2010). This suggests that we should be combining all of these datasets together when we train computational models to encompass far more diverse antibody responses.”

With this newfound ability to conduct head-to-head comparisons, the study could have implications for preparing for – and responding to – future flu outbreaks. “If a new variant emerges this year that circumvents antibody immunity in young adults, we can leverage other studies examining children or the elderly to quantify whether they will also be at risk,” says Einav. “The lessons learned in one study can be immediately applied to all studies.”

Next on the agenda for Einav’s research is to study the factors that shape the influenza antibody response over time – what makes a long- versus short-term response? How can the influenza vaccine be optimally designed? These are the questions Einav still wants to answer – and that work could lead to a longer-lasting, universal influenza vaccine.

Reference

- T Einav, R Ma, “Using interpretable machine learning to extend heterogeneous antibody-virus datasets,” Cell Rep Methods, 3, 100540 (2023).